- Products

-

Solutions

- Industrial & Commercial

- Aerospace & Defense

- Scientific Research

- Life & Health Sciences

- Semiconductor

- Laser Measurement

- Directed Energy

- Laser Measurement

- Laser Skin Treatment (IPL)

- Laser Surgery

- Medical Imaging

- Disinfection with UV Light

- Infrared Optics

- Fever Detection

- Laser Optics

- PCB Via Drilling

- Semiconductor

-

Resources

- Literature & Software

- Laser Measurement

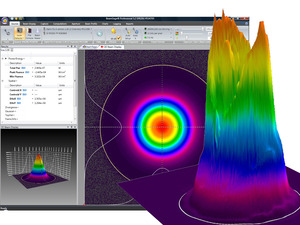

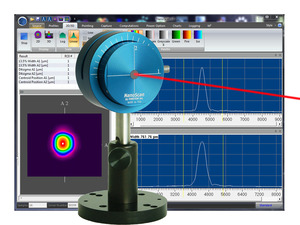

- Laser Beam Analysis

- IR Imaging Lenses

- Laser Optics

- LED Measurement

-

Support

- News & Press Releases

- Trade Shows & Events

- News & Press Releases

- ePulse Newsletters

- Ophir IR Optics Newsletter

- Webinars

- Service Returns & Calibration

- Returns & RMA Requests

- Calibration Service Centers

- Calibration Portal

- Photonics Product Support

- Policies & Certificates

- ISO Accreditation & Certificates

- Quality & Environmental Policy

- Volatility Statements

- Warranty Policy

- Terms of Sale

- Contact

- Sign In

- Orders

- en

-

Ultra-High Velocity
